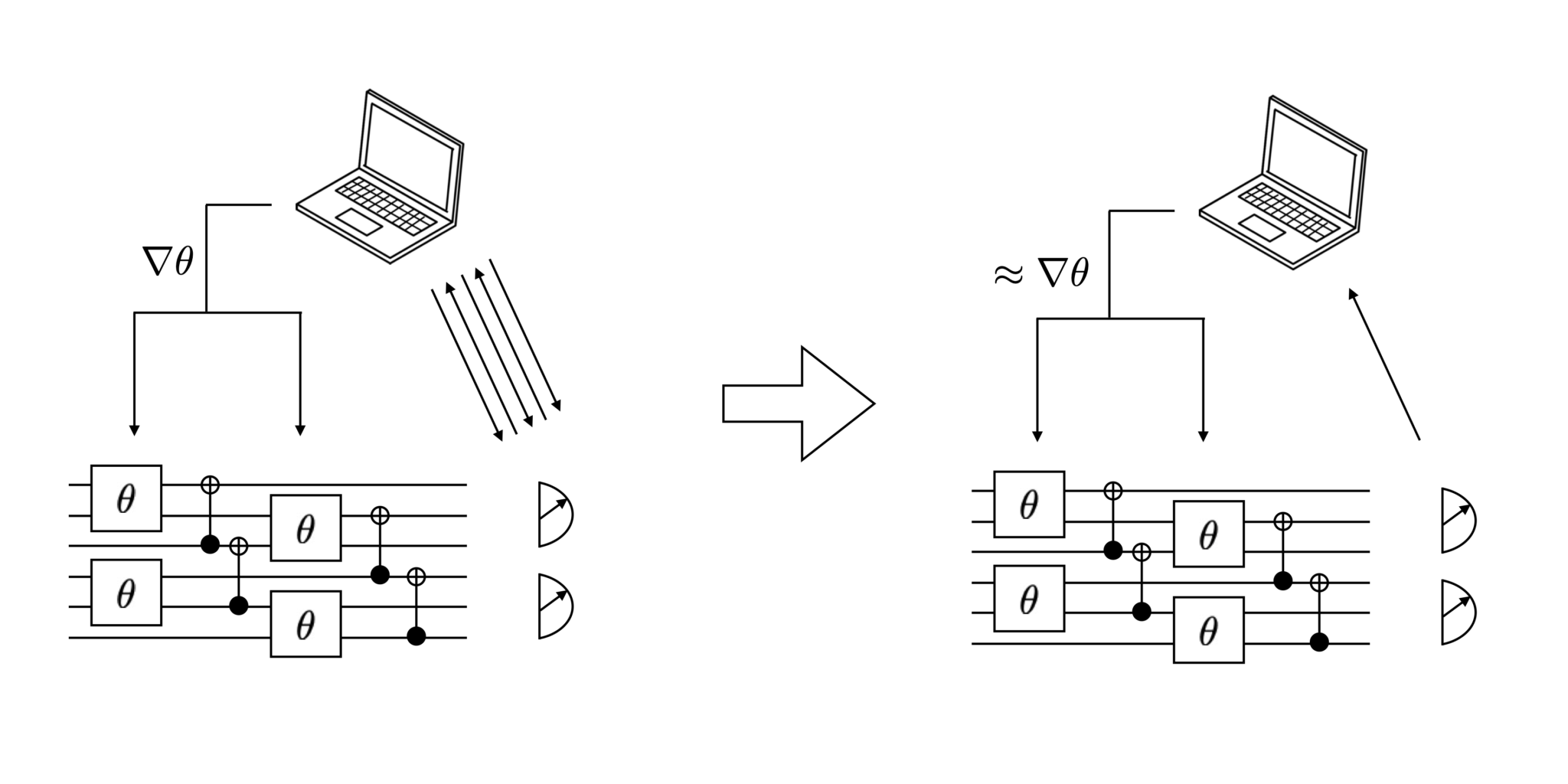

Training neural networks effectively and efficiently is an important component of Deep Learning. Large neural networks can consist of dozens, hundreds, or even thousands of layers, each with thousands of artificial neurons. Depending on the network's architecture, each of these neurons is connected to a large number of other neurons, and each connection has a trainable weight parameter that determines how the network responds to input signals. In the context of this paper, these large, complex networks are trained using the computationally expensive process of backpropagation. Additionally, neural networks benefit from training on Big Data, as more data typically produces more performant models. For example, AlexNet was trained on roughly 1.2 million images from the ImageNet database and achieved state-of-the-art results at the time. Problems of this magnitude are common, so research on parallel network optimization on distributed and parallel systems is highly important. Massively powerful computers are required to efficiently train large neural networks on large amounts of data. Thus, training neural networks in a parallel and distributed manner on large cluster computers or large shared-memory supercomputers is an active area of research. As models and datasets grow, it is possible not only to improve the hardware of a single computer (more memory, a faster CPU, a faster GPU, etc.) but also to increase the number of computers simultaneously training a single model. Possible parallel methods for training a neural network include splitting the data across multiple computational nodes and splitting the neural network itself across multiple nodes, known as Data Parallelism and Model Parallelism, respectively. We provide experimental results that validate our work and show that our implementation can scale with respect to both dataset size and the number of compute nodes in the cluster.
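To make the idea of Data Parallelism described above more concrete, here is a minimal single-process sketch in plain Python. It is not the implementation discussed in this post and does not use HPCC Systems or TensorFlow. The "nodes" are simulated in a loop, the model is a toy linear regression, and every name in it (`make_data`, `shard`, `local_gradient`, `data_parallel_sgd`) and every hyperparameter is an assumption chosen for illustration. Each simulated node holds its own shard of the data and an identical copy of the weights, computes a gradient on a local mini-batch, and the gradients are averaged before a single shared update.

```python
import random

def make_data(n, seed=0):
    """Synthetic 1-D regression data: y = 3x + 1 plus small noise (illustrative only)."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = rng.uniform(-1.0, 1.0)
        data.append((x, 3.0 * x + 1.0 + rng.gauss(0.0, 0.01)))
    return data

def shard(data, num_nodes):
    """Split the dataset into one shard per simulated node (round-robin)."""
    return [data[i::num_nodes] for i in range(num_nodes)]

def local_gradient(w, b, batch):
    """Mean-squared-error gradient of y_hat = w*x + b on one mini-batch."""
    gw = gb = 0.0
    for x, y in batch:
        err = (w * x + b) - y
        gw += 2.0 * err * x
        gb += 2.0 * err
    n = len(batch)
    return gw / n, gb / n

def data_parallel_sgd(data, num_nodes=4, steps=500, batch_size=8, lr=0.1, seed=1):
    """Synchronous data-parallel SGD, with the nodes simulated sequentially."""
    rng = random.Random(seed)
    shards = shard(data, num_nodes)
    w, b = 0.0, 0.0  # every node holds an identical replica of these weights
    for _ in range(steps):
        # Each node samples a mini-batch from its own shard and computes a gradient.
        grads = [local_gradient(w, b, rng.sample(s, batch_size)) for s in shards]
        # Synchronous aggregation: average the per-node gradients.
        gw = sum(g[0] for g in grads) / num_nodes
        gb = sum(g[1] for g in grads) / num_nodes
        # One shared update keeps all replicas identical.
        w -= lr * gw
        b -= lr * gb
    return w, b

w, b = data_parallel_sgd(make_data(400))
print(f"learned w={w:.2f}, b={b:.2f}")
```

In a real cluster the averaging step is where the communication overhead appears, since every node must exchange gradients on each update. Model Parallelism would instead split the weights themselves across nodes, which this sketch does not attempt.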
Deep Learning is an increasingly important subdomain of artificial intelligence, which benefits from training on Big Data. The size and complexity of the model, combined with the size of the training dataset, make the training process very computationally and temporally expensive. Accelerating the training process of Deep Learning using cluster computers faces many challenges, ranging from distributed optimizers to the large communication overhead specific to systems with off-the-shelf networking components. In this paper, we present a novel distributed and parallel implementation of stochastic gradient descent (SGD) on a distributed cluster of commodity computers. We use high-performance computing cluster (HPCC) systems as the underlying cluster environment for the implementation. We overview how the HPCC Systems platform provides the environment for distributed and parallel Deep Learning and how it provides a facility to work with third-party open source libraries such as TensorFlow, and we detail our use of third-party libraries and HPCC functionality for implementation.

Stochastic gradient descent (SGD) has become the method of choice for training highly complex and nonconvex models since it can not only recover good solutions to minimize training errors but also generalize well. In the literature, the computational and statistical properties of SGD are studied separately to understand its behavior. However, there is a lack of studies that jointly consider the computational and statistical properties in a nonconvex learning setting. In this paper, we develop novel learning rates of SGD for nonconvex learning by presenting high-probability bounds for both computational and statistical errors. We show that the complexity of SGD iterates grows in a controllable manner with respect to the iteration number, which sheds light on how an implicit regularization can be achieved by tuning the number of passes to balance the computational and statistical errors. As a byproduct, we also slightly refine the existing studies on the uniform convergence of gradients by showing its connection to Rademacher chaos complexities.
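The remark above about tuning the number of passes can be illustrated with a small experiment. The sketch below does not reproduce the high-probability bounds discussed here. It only shows the practical counterpart: run SGD on a small nonconvex model for several passes over the training data, record the error on a held-out set after each pass, and keep the pass count with the lowest held-out error. The model `a * tanh(w * x + b)`, the synthetic data, the helper names, and all hyperparameters are assumptions made for illustration.

```python
import math
import random

def make_data(n, rng, noise=0.3):
    """Hypothetical noisy samples of y = 1.5 * tanh(2x)."""
    data = []
    for _ in range(n):
        x = rng.uniform(-2.0, 2.0)
        data.append((x, 1.5 * math.tanh(2.0 * x) + rng.gauss(0.0, noise)))
    return data

def predict(params, x):
    a, w, b = params
    return a * math.tanh(w * x + b)

def mse(params, data):
    return sum((predict(params, x) - y) ** 2 for x, y in data) / len(data)

def sgd_pass(params, data, lr, rng):
    """One pass over the shuffled training data, one example per update."""
    a, w, b = params
    order = list(data)
    rng.shuffle(order)
    for x, y in order:
        t = math.tanh(w * x + b)
        err = a * t - y
        slope = 1.0 - t * t  # derivative of tanh at the pre-activation
        grad_a = 2.0 * err * t
        grad_w = 2.0 * err * a * slope * x
        grad_b = 2.0 * err * a * slope
        a -= lr * grad_a
        w -= lr * grad_w
        b -= lr * grad_b
    return (a, w, b)

def select_num_passes(train, valid, max_passes=30, lr=0.05, seed=0):
    """Track held-out error after every pass and remember the best pass count."""
    rng = random.Random(seed)
    params = (0.5, 0.5, 0.0)
    best_err, best_pass, best_params = mse(params, valid), 0, params
    history = []
    for p in range(1, max_passes + 1):
        params = sgd_pass(params, train, lr, rng)
        train_err, valid_err = mse(params, train), mse(params, valid)
        history.append((p, train_err, valid_err))
        if valid_err < best_err:
            best_err, best_pass, best_params = valid_err, p, params
    return best_pass, best_err, best_params, history

data_rng = random.Random(42)
train_set = make_data(20, data_rng)    # small training set
valid_set = make_data(200, data_rng)   # larger held-out set
best_pass, best_err, best_params, history = select_num_passes(train_set, valid_set)
print("selected passes:", best_pass, "held-out MSE:", round(best_err, 4))
```

Training error reflects the computational side (how well SGD has optimized so far), while the gap between training and held-out error reflects the statistical side. Stopping at the selected pass count is one simple way to trade the two off without adding an explicit regularization term.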
